Researcher & Builder

I design interactive systems at the intersection of AR, spatial computing, and AI — exploring how intelligent, embodied interfaces can reshape the way we create, visualize, and engage with digital content in the real world.


Now

What I'm currently working on and recent milestones.

Publications

Peer-reviewed research in AR, spatial computing, and human-computer interaction.

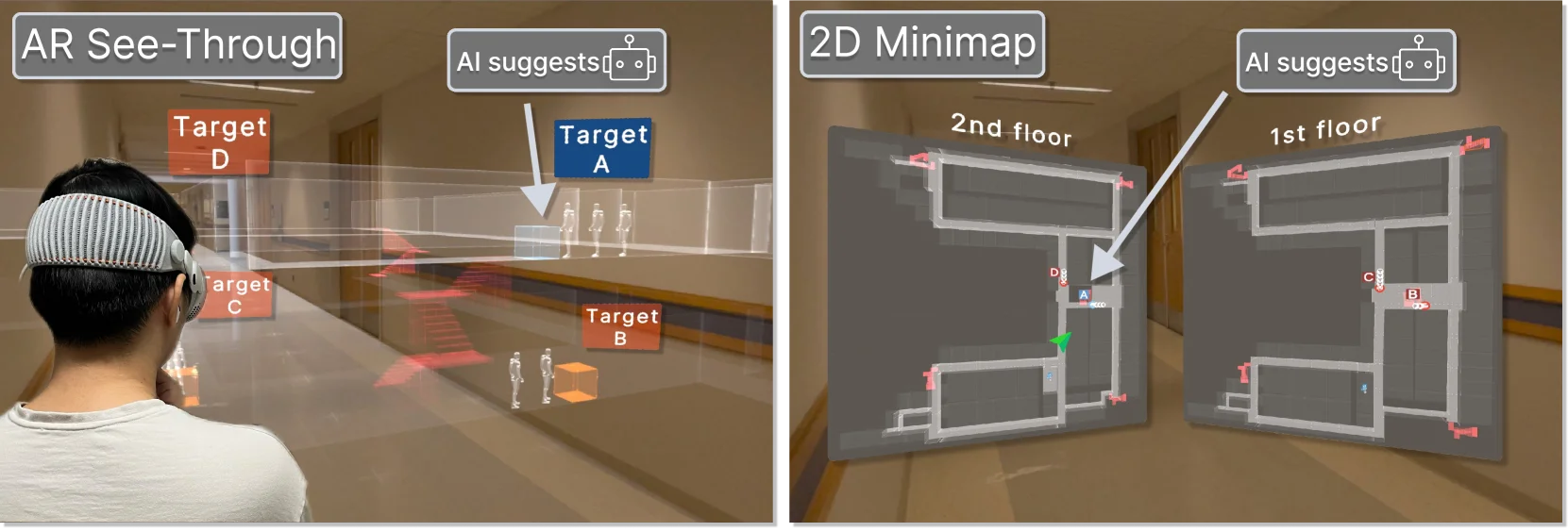

Can AR Embedded Visualizations Foster Appropriate Reliance on AI in Spatial Decision-Making? A Comparative Study of AR X-Ray vs. 2D Minimap

Xianhao Carton Liu, Difan Jia, Tongyu Nie, Evan Suma Rosenberg, Victoria Interrante, Chen Zhu-Tian

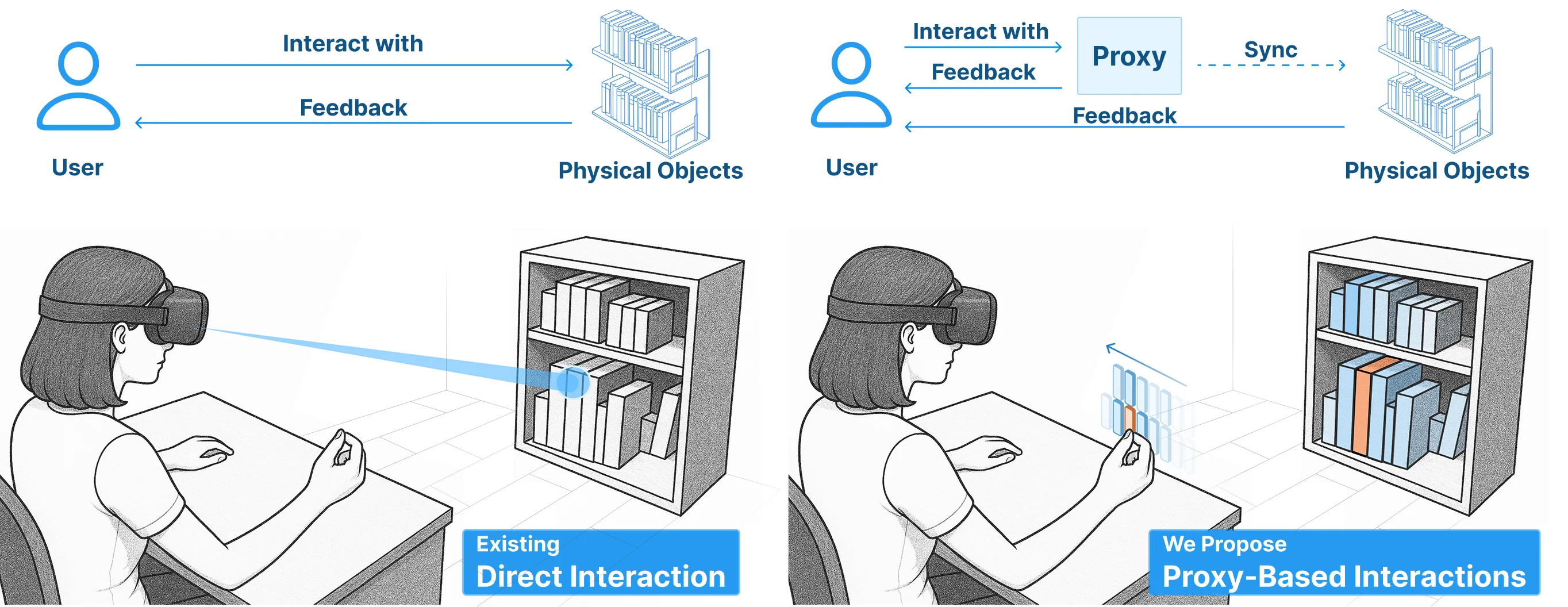

Reality Proxy: Fluid Interactions with Real-World Objects in MR via Abstract Representations

Xiaoan Liu, Difan Jia, Xianhao Carton Liu, Mar Gonzalez-Franco, Chen Zhu-Tian

Projects

Systems built at the intersection of AR, spatial computing, and fabrication.

NoteV

Every AI note-taker can hear. Ours can see — a multimodal AI assistant for Meta Ray-Ban smart glasses that captures and structures your lectures

Inspired by VisionClaw

Backed by UTD Draper Pitch Competition

OverSite

AI copilot for construction field workers — smart glasses + real-time AI vision that saves hours of documentation and inspection time

RefBib

Drop a PDF, get .bib — extract real BibTeX from academic references with zero AI hallucinations, verified by CrossRef, Semantic Scholar, and DBLP

AR Embedded Visualization & AI Reliance

Studying how AR embedded visualizations affect human reliance on AI in spatial decision-making

Reality Proxy

Decoupling MR interaction from physical constraints via AI-powered abstract proxy representations