Build 2026

NoteV

Every AI note-taker can hear. Ours can see — a multimodal AI assistant for Meta Ray-Ban smart glasses that captures and structures your lectures

Smart Glasses · AI · EdTech · iOS · Swift

Overview

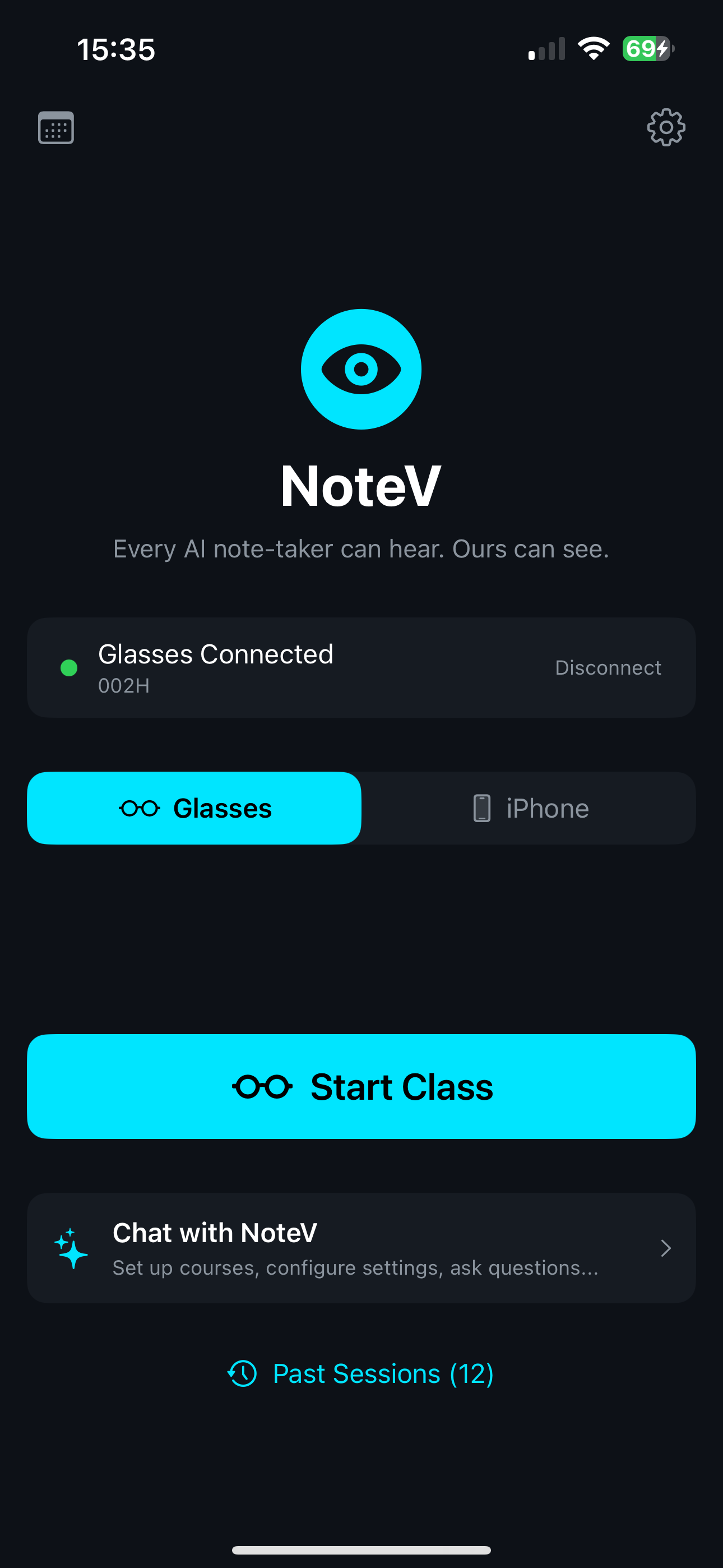

NoteV is an AI classroom assistant designed for Meta Ray-Ban smart glasses that captures audio and visual content during lectures, then generates structured multimodal notes. The system works equally well with just an iPhone camera — no smart glasses required.

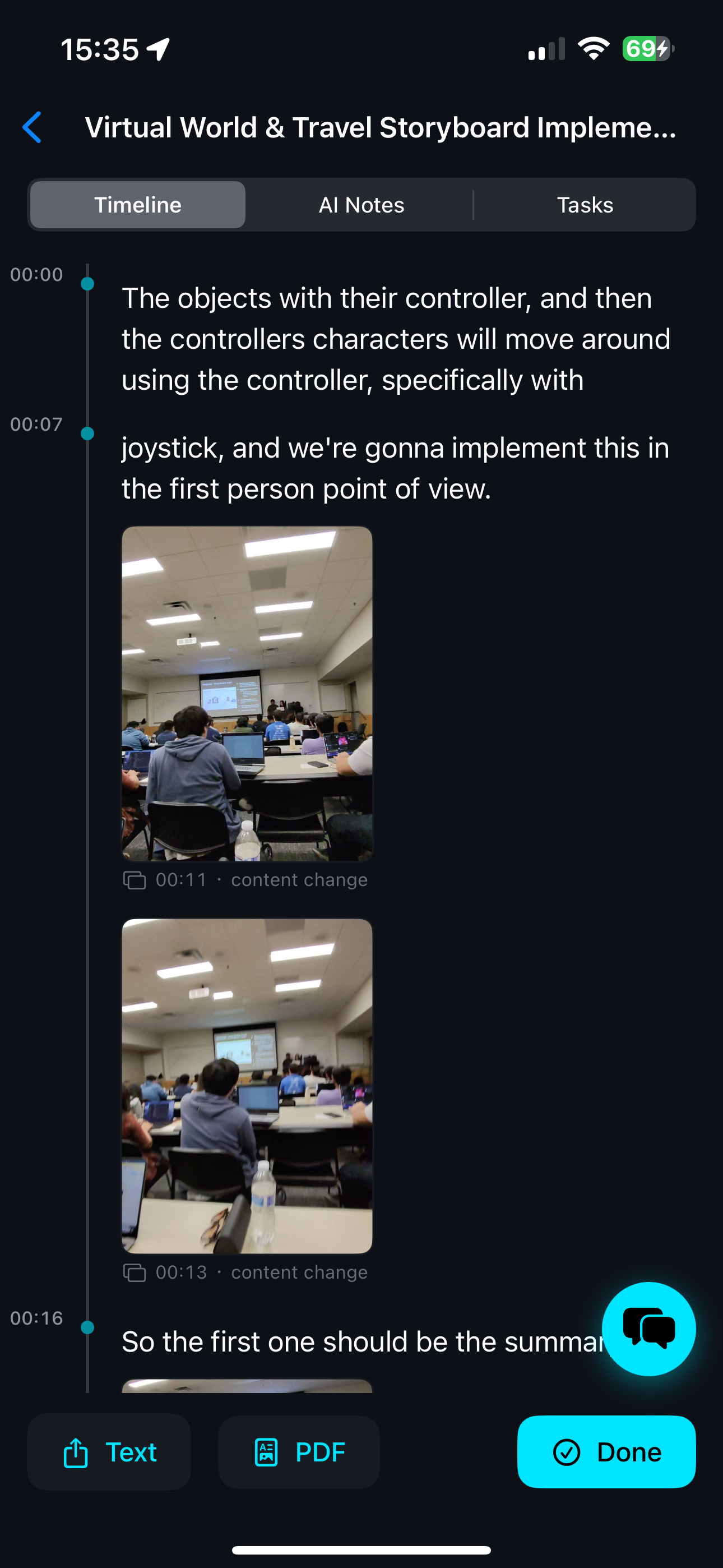

Three-Layer Output

- Polished Timeline — AI-refined transcript with embedded images, highlighted bookmarks, and persistent section headers.

- AI Notes — Structured notes segmented by slide transitions, complete with timestamps and a navigation table of contents.

- Action Items — Extracted tasks with categories, priority levels, and due dates; supports batch export to iOS Reminders and Calendar.
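As a rough illustration of how extracted action items might be modeled and ordered before a batch export, here is a minimal sketch. The `ActionItem`, `Priority`, and `exportOrder` names are hypothetical and not taken from the NoteV codebase. The integer mapping follows EventKit's reminder priority scale (1 for high, 5 for medium, 9 for low).

```swift
import Foundation

/// Hypothetical priority levels; the raw value drives sort order.
enum Priority: Int, Comparable {
    case high = 0, medium, low

    static func < (lhs: Priority, rhs: Priority) -> Bool {
        lhs.rawValue < rhs.rawValue
    }

    /// Integer on EventKit's reminder priority scale (1 = high, 5 = medium, 9 = low).
    var reminderPriorityValue: Int {
        switch self {
        case .high: return 1
        case .medium: return 5
        case .low: return 9
        }
    }
}

/// Hypothetical model for one extracted task.
struct ActionItem {
    let title: String
    let category: String
    let priority: Priority
    let dueDate: Date?
}

/// Orders items for batch export: higher priority first, then earlier due
/// date, with undated items last within each priority level.
func exportOrder(_ items: [ActionItem]) -> [ActionItem] {
    items.sorted { a, b in
        if a.priority != b.priority { return a.priority < b.priority }
        switch (a.dueDate, b.dueDate) {
        case let (x?, y?): return x < y
        case (_?, nil): return true
        default: return false
        }
    }
}
```

Keeping the ordering logic separate from any EventKit calls means it can be checked without requesting Reminders or Calendar permissions.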

Smart Recording

- Dual Capture Sources — Meta Ray-Ban glasses via DAT SDK or iPhone rear camera with automatic fallback.

- Real-Time Speech Processing — Deepgram WebSocket streaming with connection persistence and graceful disconnection.

- Intelligent Bookmarking — Automatically identifies key moments using a 4-tier keyword taxonomy with confidence metrics.

- Visual Intelligence — 5-second sampling intervals, SSIM change detection, perceptual hashing for slide deduplication.

- Slide Content Analysis — LLM vision extracts slide information (titles, bullet points, equations, diagrams).
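The slide deduplication step above can be sketched with a difference hash (dHash), one common form of perceptual hashing. This is an assumption for illustration: the source does not say which hash NoteV uses. The sketch also assumes each frame has already been downscaled to a 9x8 grayscale grid, and `SlideDeduplicator` and its `maxDistance` threshold are hypothetical.

```swift
import Foundation

/// Computes a 64-bit difference hash (dHash) from a 9x8 grayscale grid.
/// `pixels` holds 8 rows of 9 luminance values in row-major order (72 values).
func differenceHash(_ pixels: [UInt8]) -> UInt64 {
    precondition(pixels.count == 72, "expected a 9x8 grayscale grid")
    var hash: UInt64 = 0
    for row in 0..<8 {
        for col in 0..<8 {
            let left = pixels[row * 9 + col]
            let right = pixels[row * 9 + col + 1]
            // One bit per horizontal neighbor pair: 1 if brightness drops.
            hash = (hash << 1) | (left > right ? 1 : 0)
        }
    }
    return hash
}

/// Number of differing bits between two hashes.
func hammingDistance(_ a: UInt64, _ b: UInt64) -> Int {
    (a ^ b).nonzeroBitCount
}

/// Remembers hashes of stored slides and rejects near-duplicates.
struct SlideDeduplicator {
    private(set) var seen: [UInt64] = []
    let maxDistance: Int  // hypothetical threshold; tune on real footage

    init(maxDistance: Int = 6) {
        self.maxDistance = maxDistance
    }

    /// Returns true if the hash is new and was recorded.
    mutating func insertIfNew(_ hash: UInt64) -> Bool {
        if seen.contains(where: { hammingDistance($0, hash) <= maxDistance }) {
            return false
        }
        seen.append(hash)
        return true
    }
}
```

Comparing hashes by Hamming distance rather than exact equality lets small changes in lighting or camera angle still count as the same slide.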

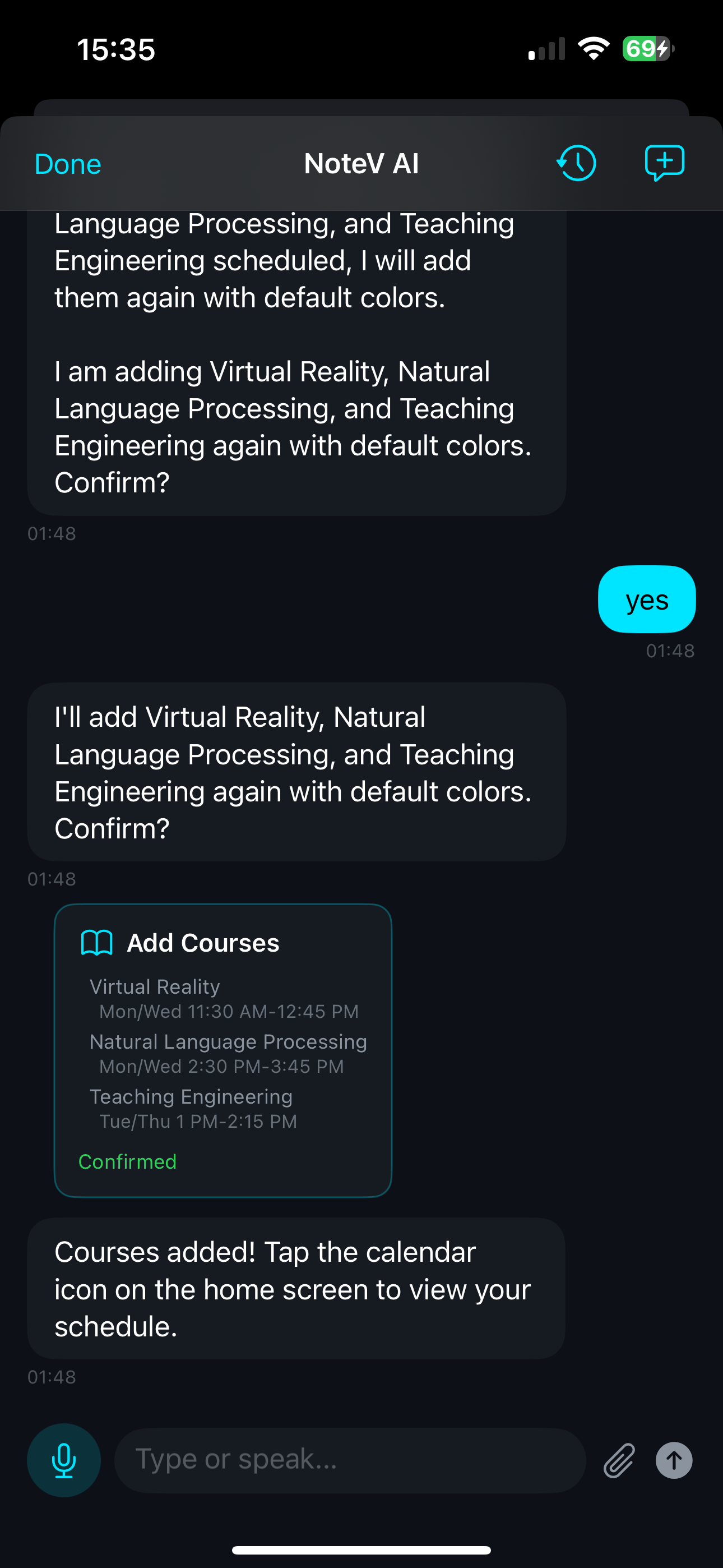

AI Chat

- Unified conversational interface for note queries, course setup, settings, and reminder creation.

- Voice-powered input via Deepgram transcription.

- Interactive action cards for user confirmation on course additions, setting changes, and reminder generation.

- Full access to session transcripts, generated notes, slide images, and task lists.

Architecture

The system is organized into four layers:

- Capture Layer — `CaptureProvider` protocol supporting Meta Ray-Ban DAT SDK v0.4.0 and `AVCaptureSession` for iPhone fallback.

- Processing Layer — `AudioPipeline` (Deepgram nova-3 primary, Apple Speech fallback), `FramePipeline` (SSIM + perceptual hash deduplication), `SmartBookmarkDetector`, and `SessionRecorder` orchestration.

- Generation Layer — `TranscriptPolisher`, `SlideAnalyzer` (LLM vision), `NoteGenerator` (multimodal LLM), and `TodoExtractor`.

- Presentation Layer — Three output views (Timeline, Notes, Actions), AI Chat interface, and course management dashboard.
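To make the capture-layer fallback concrete, here is a minimal sketch of how a `CaptureProvider` abstraction could pick a source. Only the protocol name comes from the description above. Its members (`name`, `isAvailable`, `start()`), the `StubProvider` type, and `selectProvider` are assumptions for illustration and stand in for the real DAT SDK and `AVCaptureSession` integrations.

```swift
import Foundation

/// Hypothetical shape of the capture abstraction; member names are assumptions.
protocol CaptureProvider {
    var name: String { get }
    var isAvailable: Bool { get }
    func start() throws
}

enum CaptureError: Error {
    case noProviderAvailable
}

/// Stand-in provider used here instead of real SDK or camera code.
struct StubProvider: CaptureProvider {
    let name: String
    let isAvailable: Bool
    func start() throws {}
}

/// Picks the first available provider in preference order,
/// e.g. glasses first, then the iPhone camera.
func selectProvider(from candidates: [any CaptureProvider]) throws -> any CaptureProvider {
    guard let provider = candidates.first(where: { $0.isAvailable }) else {
        throw CaptureError.noProviderAvailable
    }
    return provider
}
```

Ordering the candidate list by preference keeps the fallback rule in one place, so the rest of the pipeline only ever sees a single `CaptureProvider`.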

Tech Stack

- Platform — iOS 17+, Swift 6, SwiftUI

- Smart Glasses — Meta Ray-Ban Gen-2 (DAT SDK v0.4.0)

- Speech-to-Text — Deepgram nova-3 WebSocket (primary); Apple Speech (fallback)

- LLM — OpenAI GPT-4o, Anthropic Claude, Google Gemini (user-configurable)

- Native APIs — EventKit, PDFKit, Speech, AVFoundation

My Role

Everything — system architecture, multimodal capture pipeline, AI note generation, chat interface, and course management UX. Built entirely through Claude Code.